Why we created another Kafka client for Node.js

Node.js TSC Member, Principal Engineer at Platformatic, Polyglot Developer. RPG and LARP addict and a nerd about a lot more. Surrounded by lovely chubby cats.

Apache Kafka has become a cornerstone for building real-time data pipelines and streaming applications, especially for enterprises in the fintech and media industries, where usage spikes are common.

If you’re a Node.js developer or manager, integrating Kafka into your projects traditionally meant choosing between two primary libraries:

KafkaJS, a pure JavaScript implementation, or

Node-rdkafka, a performant C++ wrapper around Confluent's battle-tested librdkafka.

Unfortunately, kafkajs is no longer maintained, and its last release was over two years ago. Its consumer API is also complex: the user starts a consumer and passes it a callback, which is then invoked with the data along with several control functions that modify the consumer's behavior. Besides hurting the developer experience, this design also affected performance.

node-rdkafka, on the other hand, has some compatibility issues with Node.js because it is based on the outdated NAN rather than node-addon-api. It has also never supported running inside worker threads, which are often needed to avoid blocking the Node.js event loop. Last but not least, we found that the APIs exposed by both clients made consuming messages more difficult than necessary, and we saw an opportunity to build a more intuitive developer experience.

This left Node.js teams without a modern, production-ready, and performant Kafka client. Once our customers started asking us to solve this problem, we realized it fit in perfectly with our mission to address gaps in the Node.js ecosystem for enterprise developers.

So today, we are proud to announce our Kafka library for Node: @platformatic/kafka.

Designed with modern Node.js development in mind, this new driver aims to offer a balance of performance, developer experience, native TypeScript support, and ease of integration.

In this article, we’ll reveal everything you need to know about @platformatic/kafka, its design goals, and a head-to-head comparison with both kafkajs and node-rdkafka.

So whether you're evaluating Kafka clients for a new project or considering a migration, this comparison will help you make an informed decision.

Rethinking the Kafka developer experience in Node.js

The three libraries offer very different developer experiences. This is partly due to the different ages of the projects and, in node-rdkafka's case, to the structure of the underlying C++ library.

In the following walk-throughs, we’re going to analyze the production and consumption of data. In all three cases, the Admin API has a similar developer experience.

Producer API

kafkajs has a Promise-based API, which makes it pretty easy to produce messages to a topic.

import { Kafka } from 'kafkajs'

const client = new Kafka({ clientId: 'id', brokers: ['localhost:9092'] })
const producer = client.producer()

await producer.connect()

await producer.send({
  topic: 'topic',
  messages: [
    { key: 'key', value: 'value', headers: { a: '123', b: '456' } }
  ],
  // 0 = do not wait for any broker acknowledgement
  acks: 0
})

console.log('The message has been delivered')

await producer.disconnect()

However, there is a quirk here: kafkajs has no built-in serialization. It only accepts strings or Buffers as message keys and values, so the user must serialize any other format manually.
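Because kafkajs only accepts strings or Buffers, structured payloads such as JSON objects have to be encoded before every send call. A minimal sketch of centralizing that step in a small helper follows. `toKafkaJsMessage` and the `OrderEvent` shape are hypothetical names used only for illustration, and the returned object simply matches the `{ key, value, headers }` message shape used in the kafkajs example above.

```typescript
// Hypothetical event shape, used only for this illustration.
interface OrderEvent {
  orderId: string
  amount: number
}

// The message shape kafkajs accepts in `messages` (string fields here).
interface StringMessage {
  key: string
  value: string
  headers: Record<string, string>
}

// Hypothetical helper: JSON-encode the value by hand, since kafkajs
// performs no serialization of its own.
function toKafkaJsMessage<T> (
  key: string,
  value: T,
  headers: Record<string, string> = {}
): StringMessage {
  return { key, value: JSON.stringify(value), headers }
}

const message = toKafkaJsMessage<OrderEvent>('order-1', { orderId: 'order-1', amount: 42 })
console.log(message.value) // {"orderId":"order-1","amount":42}

// With a connected kafkajs producer this would be sent as:
// await producer.send({ topic: 'topic', messages: [message] })
```

Every producer call site has to remember to go through a helper like this. That repetition is the gap a built-in serializers option closes.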

node-rdkafka takes a different approach, which makes the developer experience more cumbersome. Because messages are not sent immediately but stored in a local queue, the user must listen for the optional delivery-report event, which has to be explicitly enabled in the constructor (the dr_cb option).

import RDKafka from 'node-rdkafka'

const producer = new RDKafka.Producer(
  // dr_cb: true enables the delivery-report event
  { 'client.id': 'id', 'metadata.broker.list': 'localhost:9092', dr_cb: true },
  { acks: 0 }
)

producer.on('delivery-report', () => {
  console.log('The message has been delivered')
  producer.disconnect()
})

producer.connect({}, () => {
  // Poll every millisecond so that delivery reports are emitted
  producer.setPollInterval(1)

  // Arguments: topic, partition, value, key, timestamp, opaque, headers
  producer.produce(
    'topic', 0, Buffer.from('value'), 'key', -1, null, [{ a: '123', b: '456' }]
  )
})

Here, the message components are passed as positional arguments instead of being wrapped in an object, which makes them harder to identify. The method also expects an opaque value (the null in the code above) before the headers, which pushes the headers to the end of the parameter list.
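To see why the positional signature is error-prone, the sketch below maps a named-field object onto the argument order used in the node-rdkafka call above (topic, partition, value, key, timestamp, opaque, headers). `ProduceArgs`, `ProduceTuple`, and `toProduceArgs` are hypothetical names, not part of node-rdkafka. The result is just the tuple you would spread into `producer.produce(...)`.

```typescript
// Named fields for one message: a hypothetical wrapper, not a node-rdkafka type.
interface ProduceArgs {
  topic: string
  partition: number | null
  value: Uint8Array // node-rdkafka expects a Buffer, which is a Uint8Array subclass
  key: string
  timestamp: number | null
  opaque: unknown
  headers: Array<Record<string, string>>
}

// The positional order of the produce() call shown above.
type ProduceTuple = [
  string, number | null, Uint8Array, string, number | null, unknown, Array<Record<string, string>>
]

// Map named fields onto positions, so callers never have to remember
// that `opaque` sits between `timestamp` and `headers`.
function toProduceArgs (args: ProduceArgs): ProduceTuple {
  return [args.topic, args.partition, args.value, args.key, args.timestamp, args.opaque, args.headers]
}

const tuple = toProduceArgs({
  topic: 'topic',
  partition: 0,
  value: new TextEncoder().encode('value'),
  key: 'key',
  timestamp: -1,
  opaque: null,
  headers: [{ a: '123', b: '456' }]
})

// With node-rdkafka this would become: producer.produce(...tuple)
console.log(tuple.length) // 7
```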

Note that, as discussed in the benchmark section below, this queue-based approach requires continuous polling of the producer (or a call to setPollInterval) to receive delivery reports, which delays delivery confirmation.

With @platformatic/kafka, we wanted to take what worked in kafkajs and simplify the developer experience even further by removing the need to create a client and then a producer. We then added support for both promise-based and callback-based APIs.
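Serving both a promise-based and a callback-based API from the same method is a common Node.js pattern. If the caller passes a callback, the result goes there; otherwise the method returns a promise. The sketch below illustrates the general technique with a hypothetical `sendMessage` function and a simulated asynchronous send. It is not @platformatic/kafka's actual implementation.

```typescript
// Node-style callback: an error first, then the result.
type Callback<T> = (error: Error | null, result?: T) => void

// Stand-in for real asynchronous I/O such as a network round trip.
function simulateSend (payload: string, done: Callback<number>): void {
  setTimeout(() => {
    if (payload.length === 0) done(new Error('empty payload'))
    else done(null, payload.length)
  }, 0)
}

// Overloads: with a callback nothing is returned; without one a Promise is.
function sendMessage (payload: string, callback: Callback<number>): void
function sendMessage (payload: string): Promise<number>
function sendMessage (payload: string, callback?: Callback<number>): Promise<number> | void {
  if (callback) {
    simulateSend(payload, callback)
    return
  }

  return new Promise<number>((resolve, reject) => {
    simulateSend(payload, (error, result) => {
      if (error) reject(error)
      else resolve(result as number)
    })
  })
}

// Promise style
sendMessage('hello').then(bytes => console.log('promise:', bytes))

// Callback style
sendMessage('hello', (error, bytes) => {
  if (error) throw error
  console.log('callback:', bytes)
})
```

The callback path avoids allocating a promise. Callers who prefer `await` still get one.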

For us, the real game changer for the developer experience was implementing direct support for serialization via the serializers option.

With the serializers option, the user can provide a different serializer for each message component: key, value, header key, and header value. These serializers are set once in the constructor and don’t need to be provided on each produce call.
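Conceptually, a serializers object is just one function per message component that turns a typed value into bytes. It is configured once and applied to every message. The sketch below models that idea in plain TypeScript. The `Serializer` and `Serializers` types, `serializeMessage`, and the local `utf8`/`json` functions are assumptions written for illustration and are not imported from @platformatic/kafka, whose exact types may differ.

```typescript
// A serializer turns a typed value into bytes (Node.js Buffers are Uint8Arrays).
type Serializer<T> = (data: T) => Uint8Array

// One serializer per message component, configured once up front.
interface Serializers<K, V, HK, HV> {
  key: Serializer<K>
  value: Serializer<V>
  headerKey: Serializer<HK>
  headerValue: Serializer<HV>
}

interface Message<K, V, HK, HV> {
  key: K
  value: V
  headers: Array<[HK, HV]>
}

interface EncodedMessage {
  key: Uint8Array
  value: Uint8Array
  headers: Array<[Uint8Array, Uint8Array]>
}

const encoder = new TextEncoder()
const utf8: Serializer<string> = data => encoder.encode(data)

function json<T> (): Serializer<T> {
  return data => encoder.encode(JSON.stringify(data))
}

// Apply the configured serializers to every component of one message.
function serializeMessage<K, V, HK, HV> (
  serializers: Serializers<K, V, HK, HV>,
  message: Message<K, V, HK, HV>
): EncodedMessage {
  return {
    key: serializers.key(message.key),
    value: serializers.value(message.value),
    headers: message.headers.map(([k, v]): [Uint8Array, Uint8Array] => [
      serializers.headerKey(k),
      serializers.headerValue(v)
    ])
  }
}

// Configured once; every message sent afterwards reuses the same functions.
const serializers: Serializers<string, { name: string }, string, string> = {
  key: utf8,
  value: json<{ name: string }>(),
  headerKey: utf8,
  headerValue: utf8
}

const encoded = serializeMessage(serializers, {
  key: 'key',
  value: { name: 'Alice' },
  headers: [['a', '123']]
})

console.log(new TextDecoder().decode(encoded.value)) // {"name":"Alice"}
```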

import { Producer, ProduceAcks, stringSerializers } from '@platformatic/kafka'

const producer = new Producer({
  clientId: 'id',
  bootstrapBrokers: ['localhost:9092'],
  // Serializers for key, value, header key and header value, set once
  serializers: stringSerializers
})

await producer.send({
  messages: [
    { topic: 'topic', key: 'key', value: 'value', headers: { a: '123', b: '456' } }
  ],
  acks: ProduceAcks.NO_RESPONSE
})

console.log('The message has been delivered')

await producer.disconnect()

Consumer API

kafkajs has a unique way of consuming messages. The consumer has a run method, which requires the user to provide an eachMessage or eachBatch callback, invoked for every new message or batch respectively. The callback's arguments contain the message (or batch) along with methods used to control the consumer.

import { Kafka } from 'kafkajs'

const client = new Kafka({ clientId: 'id', brokers: ['localhost:9092'] })
const consumer = client.consumer({ groupId: 'group' })

await consumer.connect()
await consumer.subscribe({ topics: ['topic'] })

await consumer.run({
  async eachMessage ({ message }) {
    console.log('Received message', message)
    return consumer.disconnect()
  }
})

As with producing, the received message's key and value are Buffers, and the user must deserialize them manually.
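In practice, every kafkajs `eachMessage` handler starts by decoding these Buffers. A minimal sketch of that manual step follows, assuming JSON-encoded values. `decodeMessage` and `RawMessage` are hypothetical names, and `RawMessage` only mirrors the subset of the kafkajs message fields used here (`key` and `value`, each a Buffer or null).

```typescript
// Subset of the fields kafkajs passes to eachMessage; a Buffer is a Uint8Array.
interface RawMessage {
  key: Uint8Array | null
  value: Uint8Array | null
}

interface Decoded<T> {
  key: string | null
  value: T | null
}

const decoder = new TextDecoder()

// Hypothetical helper: decode the key as UTF-8 and parse the value as JSON.
// The parsed value is cast to T without runtime validation.
function decodeMessage<T> (message: RawMessage): Decoded<T> {
  return {
    key: message.key === null ? null : decoder.decode(message.key),
    value: message.value === null ? null : (JSON.parse(decoder.decode(message.value)) as T)
  }
}

// Inside eachMessage this would be: const { key, value } = decodeMessage<MyType>(message)
const encoder = new TextEncoder()
const decoded = decodeMessage<{ name: string }>({
  key: encoder.encode('key'),
  value: encoder.encode('{"name":"Alice"}')
})

console.log(decoded.key, decoded.value?.name) // key Alice
```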

node-rdkafka supports two ways of consuming: manual (event-based) or stream. In the first case, the user subscribes to the topics and then invokes the consume method, receiving messages through data events.

import RDKafka from 'node-rdkafka'

const consumer = new RDKafka.KafkaConsumer({
  'client.id': 'id', 'group.id': 'group', 'metadata.broker.list': 'localhost:9092'
})

consumer.on('data', message => {
  console.log('Received message', message)

  // setTimeout is needed to avoid a crash on disconnect
  setTimeout(() => {
    consumer.disconnect()
  }, 100)
})

consumer.on('ready', () => {
  consumer.subscribe(['topic'])
  // Without arguments, consume() starts flowing mode and emits 'data' events
  consumer.consume()
})

consumer.connect()

Alternatively, node-rdkafka supports creating a standard Node.js readable stream.

import RDKafka from 'node-rdkafka'

const stream = RDKafka.KafkaConsumer.createReadStream(
  { 'client.id': 'id', 'group.id': 'group', 'metadata.broker.list': 'localhost:9092' },
  {},
  { topics: ['topic'], objectMode: true }
)

// Streams emit 'data' events and are also AsyncIterable, so either approach works

// Option 1: event-based
stream.on('data', message => {
  console.log('Received message', message)
  stream.destroy()
})

// Option 2: async iteration; the stream is implicitly destroyed after exiting the loop
for await (const message of stream) {
  console.log('Received message', message)
  break
}

@platformatic/kafka only supports streams, which simplifies the architecture while letting users choose between a callback-based (event) and a promise-based (async iteration) approach. Unlike the other two libraries, it accepts a deserializers option when creating a stream (or in the consumer's constructor), removing the need to manage deserialization manually.

import { Consumer, stringDeserializers } from '@platformatic/kafka'

const consumer = new Consumer({
  clientId: 'id',
  groupId: 'group',
  bootstrapBrokers: ['localhost:9092'],
  deserializers: stringDeserializers
})

const stream = await consumer.consume({ topics: ['topic'] })

// Streams emit 'data' events and are also AsyncIterable, so either approach works

// Option 1: event-based
stream.on('data', message => {
  console.log('Received message', message)
  stream.close(true)
})

// Option 2: async iteration; the stream is implicitly destroyed after exiting the loop
for await (const message of stream) {
  console.log('Received message', message)
  break
}

Our goal with @platformatic/kafka was to take the best of both worlds and merge them into a single, intuitive developer experience. We then extended the client's capabilities with built-in serialization and deserialization and complete type support for produced and consumed messages, as shown in the code below:

import { Consumer, stringDeserializer, jsonDeserializer } from '@platformatic/kafka'

type Person = {
  name: string
  address: string
}

const consumer = new Consumer({
  clientId: 'id',
  groupId: 'group',
  bootstrapBrokers: ['localhost:9092'],
  deserializers: {
    key: stringDeserializer,
    value: jsonDeserializer<Person>
  }
})

const stream = await consumer.consume({ topics: ['checkins'] })

stream.on('data', message => {
  /*
    The TypeScript compiler will infer that:
    - `message.key` is of type `string`
    - `message.value` is of type `Person`
    Therefore the line below is valid and autocompleted in the IDE.
  */
  console.log('Checkin', message.key, message.value.name, message.value.address)
  stream.close(true)
})
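The type inference described in the comment works because each deserializer's return type flows into the message type. The sketch below reproduces that mechanism in isolation. `Deserializer`, `TypedMessage`, `decode`, and the local `asString`/`asJson` functions are assumptions made for illustration, not @platformatic/kafka's actual type definitions.

```typescript
// A deserializer turns bytes back into a typed value.
type Deserializer<T> = (data: Uint8Array) => T

interface Person {
  name: string
  address: string
}

// The message type is derived from the deserializers' return types.
interface TypedMessage<K, V> {
  key: K
  value: V
}

const decoder = new TextDecoder()
const asString: Deserializer<string> = data => decoder.decode(data)

// Generic factory: the caller states the expected shape; JSON.parse is not validated.
function asJson<T> (): Deserializer<T> {
  return data => JSON.parse(decoder.decode(data)) as T
}

function decode<K, V> (
  deserializers: { key: Deserializer<K>, value: Deserializer<V> },
  raw: { key: Uint8Array, value: Uint8Array }
): TypedMessage<K, V> {
  return { key: deserializers.key(raw.key), value: deserializers.value(raw.value) }
}

const encoder = new TextEncoder()
const message = decode(
  { key: asString, value: asJson<Person>() },
  { key: encoder.encode('user-1'), value: encoder.encode('{"name":"Ada","address":"London"}') }
)

// K is inferred as string and V as Person, so these property accesses type-check.
console.log('Checkin', message.key, message.value.name, message.value.address)
// Checkin user-1 Ada London
```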

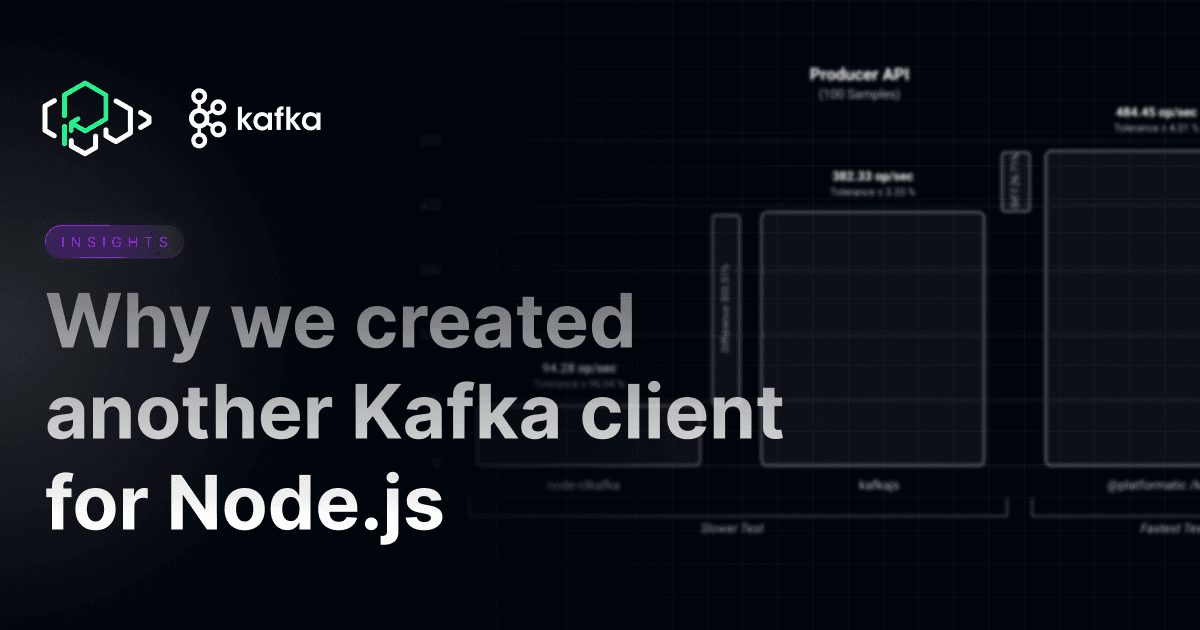

Benchmark comparison

When writing comparative benchmarks for the three libraries, the first difficulty we encountered was that node-rdkafka cannot run inside Node.js worker threads. Moreover, the library crashed on client disconnect. After investigating these issues and adapting our benchmarking tool, we were able to work around both problems.

We have tested the three libraries in the two most common scenarios: consuming and producing data.

These tests were run on an Apple MacBook Pro with an M2 Max chip running macOS Sequoia. The target cluster consisted of three brokers running on Docker. All data was produced to and consumed from a single topic with three partitions, one per broker.

It's important to note that our benchmarks were not aimed at stress testing the machines or the cluster. Instead, they stress tested a single connection to a broker in order to assess the overhead introduced by each Kafka library. For this reason, the CPU of a single process was never saturated during the benchmarks.

The benchmark code can be found in the @platformatic/kafka repository.

Producer API

╔═════════════════════╤═════════╤═══════════════╤═══════════╤════════════╗
║ Slower tests        │ Samples │ Result        │ Tolerance │ Difference ║
╟─────────────────────┼─────────┼───────────────┼───────────┼────────────╢
║ node-rdkafka        │ 100     │ 94.28 op/sec  │ ± 96.04 % │            ║
║ kafkajs             │ 100     │ 382.33 op/sec │ ± 3.33 %  │ + 305.51 % ║
╟─────────────────────┼─────────┼───────────────┼───────────┼────────────╢
║ Fastest test        │ Samples │ Result        │ Tolerance │ Difference ║
╟─────────────────────┼─────────┼───────────────┼───────────┼────────────╢
║ @platformatic/kafka │ 100     │ 484.45 op/sec │ ± 4.01 %  │ + 26.71 %  ║
╚═════════════════════╧═════════╧═══════════════╧═══════════╧════════════╝

@platformatic/kafka is the clear winner here, delivering about 27% more operations per second than kafkajs (484.45 vs 382.33 op/sec).

This also clearly illustrates the performance implications of node-rdkafka's queue- and poll-based mechanism for retrieving delivery reports, which made its performance challenging to benchmark, as the ± 96.04 % tolerance of its result suggests.

Consumer API

╔════════════════════════╤═════════╤═════════════════╤═══════════╤════════════╗
║ Slower tests           │ Samples │ Result          │ Tolerance │ Difference ║
╟────────────────────────┼─────────┼─────────────────┼───────────┼────────────╢
║ node-rdkafka (stream)  │ 10000   │ 9211.38 op/sec  │ ± 10.94 % │            ║
║ kafkajs                │ 10000   │ 9293.38 op/sec  │ ± 1.82 %  │ + 0.89 %   ║
║ node-rdkafka (evented) │ 10000   │ 9301.78 op/sec  │ ± 11.90 % │ + 0.09 %   ║
╟────────────────────────┼─────────┼─────────────────┼───────────┼────────────╢
║ Fastest test           │ Samples │ Result          │ Tolerance │ Difference ║
╟────────────────────────┼─────────┼─────────────────┼───────────┼────────────╢
║ @platformatic/kafka    │ 10000   │ 10270.39 op/sec │ ± 0.83 %  │ + 10.41 %  ║
╚════════════════════════╧═════════╧═════════════════╧═══════════╧════════════╝

Here again, @platformatic/kafka is the winner, about 10% faster than the next-best result. Because it avoids unnecessary data copying, it also deserializes the data within this benchmark. The other two libraries leave deserialization as a manual step for the user, which isn't accounted for in their results.
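The Difference column in both tables appears to compare each result with the row directly above it (the next-slower library), not with the slowest one. Recomputing it from the published op/sec values reproduces every entry to within rounding. The sketch below performs that computation. `relativeDifferences` is a hypothetical helper, and the small gap for kafkajs in the producer table (about 305.53 % here versus 305.51 % published) most likely comes from working with the rounded op/sec figures.

```typescript
interface Result {
  name: string
  opsPerSec: number
}

// Percentage gain of each result over the one listed just before it.
function relativeDifferences (results: Result[]): Array<{ name: string, diffPercent: number | null }> {
  return results.map((result, i) => ({
    name: result.name,
    diffPercent: i === 0 ? null : (result.opsPerSec / results[i - 1].opsPerSec - 1) * 100
  }))
}

// op/sec values copied from the two tables, ordered slowest to fastest.
const producerResults: Result[] = [
  { name: 'node-rdkafka', opsPerSec: 94.28 },
  { name: 'kafkajs', opsPerSec: 382.33 },
  { name: '@platformatic/kafka', opsPerSec: 484.45 }
]

const consumerResults: Result[] = [
  { name: 'node-rdkafka (stream)', opsPerSec: 9211.38 },
  { name: 'kafkajs', opsPerSec: 9293.38 },
  { name: 'node-rdkafka (evented)', opsPerSec: 9301.78 },
  { name: '@platformatic/kafka', opsPerSec: 10270.39 }
]

for (const { name, diffPercent } of [...relativeDifferences(producerResults), ...relativeDifferences(consumerResults)]) {
  console.log(name, diffPercent === null ? '-' : `+ ${diffPercent.toFixed(2)} %`)
}
```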

Conclusions

While we take pride in everything we release, we are particularly proud of what we're putting into the ecosystem today. @platformatic/kafka not only led both the producer and consumer benchmarks, but we believe it also represents a significant step forward in developer ergonomics for working with Kafka.

With built-in support for serialization and deserialization, native TypeScript integration, and a streamlined API, @platformatic/kafka emerges as a compelling choice for developers seeking to build robust and maintainable Kafka-based applications in Node.js.

Developers (that's you, if you've made it this far) are why we build what we build, and we'd love to hear what you think of @platformatic/kafka. Join us on Discord or send us an email (hello@platformatic.dev) with any feedback or questions you have.

Thanks and happy building 🚀