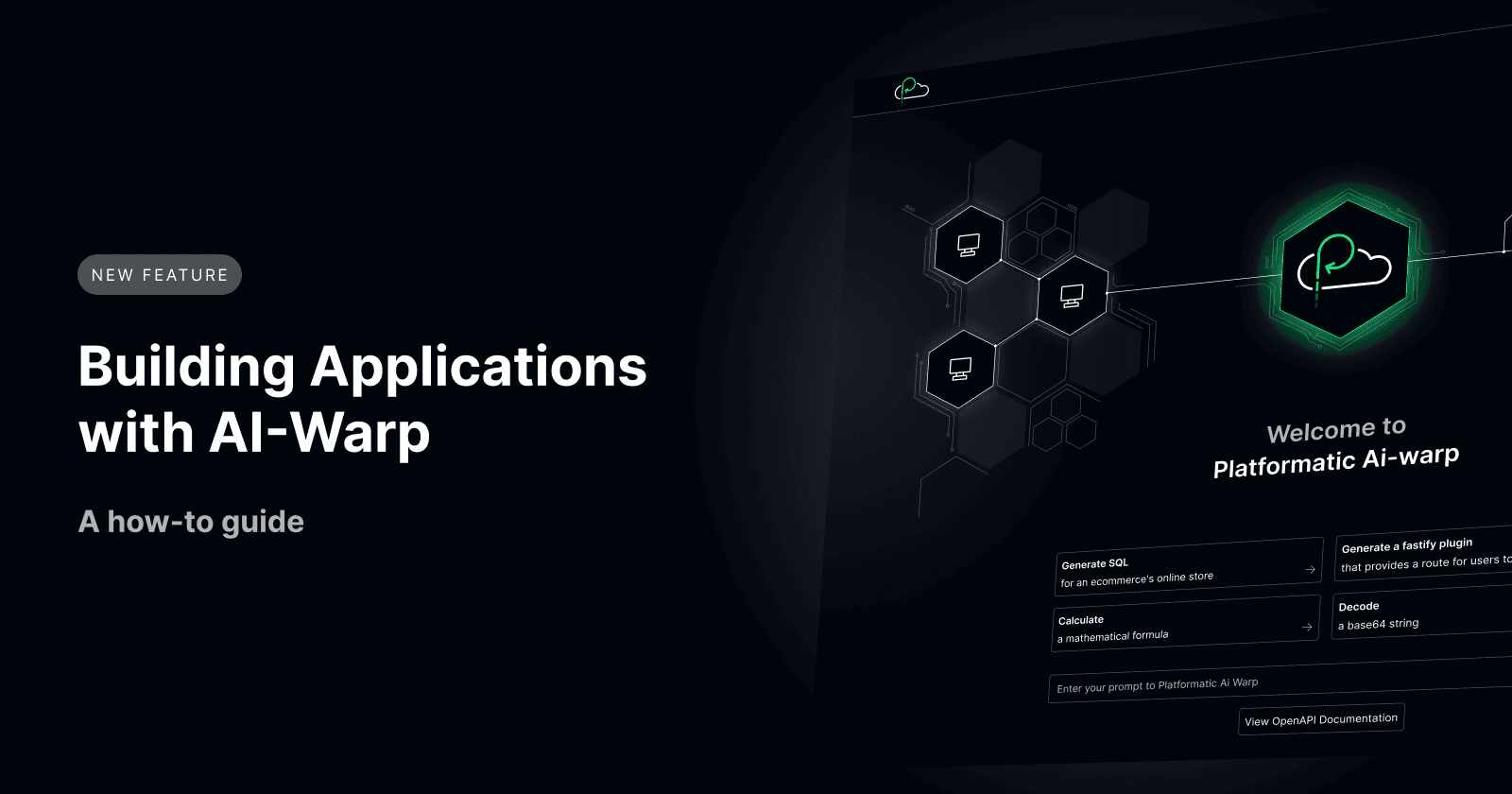

Building AI applications with Platformatic AI-Warp

A Software Engineer and Technical Writer focusing on Developer Relations, Web and Docs Engineering.

Integrating AI into existing traditional workflows has become a new trend with the introduction of powerful AI models from OpenAI, Mistral, Google, and many others. They provide APIs you can use to access their models, and with tools like the Platformatic AI-Warp, you can easily integrate with any model of your choice with just a few steps instead of manually connecting to these models.

In this article, you will learn how to use Platformatic AI-Warp and integrate it with your frontend framework of choice using the Platformatic Frontend Client.

A complete code version is available on GitHub.

Environment Setup

Prerequisites

To follow this tutorial, make sure to have the following prerequisites:

Basic understanding of Platformatic and JavaScript

Node.js LTS version

A frontend framework installed (We will be using Vite + React.js)

Create an AI Warp application

What is AI Warp?

Platformatic AI-Warp is a gateway for AI providers and user services to streamline the integration of different AI Large Language Models (LLMs) and services into your applications from a single Platformatic interface.

AI-Warp is available as a Stackable template within our Stackable Marketplace. It is a unified layer that standardizes interactions between your applications and various AI service providers.

AI-Warp can be configured to your chosen AI service providers, such as OpenAI, Mistral, or Llama, allowing you to send requests uniformly regardless of your AI technology. You can read more on our product launch post.

Running your AI-Warp application

To create and run your AI-Warp application, first, navigate to the GitHub page for AI-Warp and run the application using the steps below:

npx create-platformatic@latest

Select Application, then @platformatic/ai-warp

Enter your ai-app name

Select @platformatic/ai-warp

Select your AI provider

Enter the model you want to use (use the path in case of local llama2)

Enter the API key if using an online provider (OpenAI, Mistral, Azure)

Run the command to start AI-Warp:

npm start

You will see your AI warp application when you open http://localhost:3042/ in your browser.

NOTE: Check out our guide to running a local model of Llama2 and adding it as an AI provider to your platformatic.json file.

Connecting AI-Warp to your Platformatic Client

Integrating AI-Warp with your frontend applications can enhance their capabilities and make them more interactive. Using the Platformatic frontend client, you can interact with AI-Warp via a remote OpenAPI server. This section will teach you how to connect your AI-Warp to your frontend using the Platformatic client.

Creating a client for AI-Warp OpenAPI server

To create a frontend client for a remote OpenAPI server for AI-Warp, run the command below in your project directory:

npx platformatic client --frontend http://0.0.0.0:3042 --name AI

This will create an AI folder in your project directory with three files:

AI-types.d.ts: Contains types and interfaces for your AI warp.

AI.mjs: The client, a JavaScript implementation of your OpenAPI server, including routes and endpoints from AI warp.

AI.openapi.json: A JSON object of your client, including AI warp endpoints.

NOTE: Replace the URL and the name in the command with your application's URL and your preferred directory name.

Next, you need to create a factory object that serves as an instance of your client. This will interact with your AI warp API.

To do this, create a new file in your project directory called index.mjs and add the code block below:

import build from './AI.mjs';

const client = build('http://127.0.0.1:3042') // Your AI warp URL

const res = await client.postApiV1Prompt({

prompt: 'AI works!'

});

console.log(res);

We imported the build function and passed it the AI-Warp application's base URL, http://127.0.0.1:3042. We then called the postApiV1Prompt method with a test prompt and logged the response to confirm that the connection to AI-Warp works.
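The generated AI.mjs is not reproduced in this article. As a rough mental model only, a factory like build can be sketched by hand as below. The name buildSketch, the /api/v1/prompt path (inferred from the method name postApiV1Prompt), the JSON response, and the injectable fetchImpl parameter are all assumptions for illustration, not the generated code.

```javascript
// Hypothetical stand-in for the generated client factory (not the real AI.mjs).
// fetchImpl is injectable so the sketch can be exercised without a running server.
function buildSketch(baseUrl, fetchImpl = fetch) {
  // Drop trailing slashes so the joined URL has exactly one separator.
  const root = baseUrl.replace(/\/+$/, '');

  return {
    // Assumed endpoint: POST {baseUrl}/api/v1/prompt with a JSON body.
    async postApiV1Prompt(body) {
      const res = await fetchImpl(`${root}/api/v1/prompt`, {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify(body),
      });
      if (!res.ok) {
        throw new Error(`Request failed with status ${res.status}`);
      }
      // Assumes the server answers with JSON.
      return res.json();
    },
  };
}
```

Calling buildSketch('http://127.0.0.1:3042').postApiV1Prompt({ prompt: 'AI works!' }) mirrors the index.mjs example above, with the base URL fixed once at construction time.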

Building a React application

Let’s follow the official Vite documentation to create a React application with the latest version of React. Run this command:

npm create vite@latest

Here, you will be presented with a few options — for the sake of this tutorial:

Project name: … Ai-frontend

Package name: … ai-frontend

Select a framework: › React

Select a variant: › JavaScript

Once you have created the project, go to the project directory and run the command:

cd Ai-frontend

npm install

npm run dev

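Open http://localhost:5174/ in your browser to see the Vite + React starter page. Vite prints the exact URL in the terminal; its default port is 5173, and it moves to the next free port, such as 5174, when that one is already in use.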


Adding utility functions to the React app

Create a new file, utils.js, in the src folder of the project. It will house the application's helper functions.

// src/utils.js

import { useState, useCallback } from 'react';

import { postApiV1Prompt, setBaseUrl } from '../../api/AI.mjs';

setBaseUrl(import.meta.env.VITE_AI_WARP_URL);

We imported the postApiV1Prompt function and the setBaseUrl function from our API utilities module located at ../../api/AI.mjs. Using setBaseUrl, we configure our application to use the base URL specified in our environment variables (import.meta.env.VITE_AI_WARP_URL).

This approach allows us to dynamically set the base URL for API requests, which is particularly useful for handling different environments such as development, testing, and production.

You can set the base URL directly in your application code with setBaseUrl, either by hardcoding it or by reading it from an environment file (.env).
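As a minimal sketch of the environment-based approach, a .env file in the Vite project root could contain the line VITE_AI_WARP_URL=http://127.0.0.1:3042 (Vite only exposes variables prefixed with VITE_ to client code). The helper below, resolveBaseUrl, is a hypothetical name, and the fallback URL is an assumption taken from the local address used earlier in this article.

```javascript
// Hypothetical helper: pick the AI-Warp base URL from an env object,
// falling back to a local default when the variable is missing or blank.
function resolveBaseUrl(env, fallback = 'http://127.0.0.1:3042') {
  const configured =
    typeof env.VITE_AI_WARP_URL === 'string' ? env.VITE_AI_WARP_URL.trim() : '';
  const url = configured !== '' ? configured : fallback;
  // Strip trailing slashes so paths like /api/v1/stream join cleanly.
  return url.replace(/\/+$/, '');
}

// In src/utils.js this could be used as:
//   setBaseUrl(resolveBaseUrl(import.meta.env));
```

Keeping the fallback in one place avoids passing undefined to setBaseUrl when the .env file is missing.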

Fetching a Prompt

Let’s create some utility functions for fetching a prompt:

// src/utils.js

export const useFetchPrompt = () => {

const [prompt, setPrompt] = useState("");

const [response, setResponse] = useState("");

const [loading, setLoading] = useState(false);

const fetchPrompt = useCallback(async () => {

setLoading(true);

try {

const fetchedPrompt = await postApiV1Prompt({});

setPrompt(fetchedPrompt);

setLoading(false);

} catch (error) {

console.error("Failed to load prompt:", error);

setLoading(false);

}

}, []);

return { prompt, setPrompt, response, setResponse, fetchPrompt, loading };

};

Here, we created the useFetchPrompt hook, which stores the prompt, response, and loading status for our AI application.
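The hook resets the loading flag in both the success and the error branch. One possible way to express that once is a try/finally wrapper. The sketch below is framework-free; withLoading is a hypothetical helper name, not part of React or the Platformatic client.

```javascript
// Hypothetical helper: run an async task and always clear the loading flag,
// whether the task resolves or throws.
async function withLoading(setLoading, task) {
  setLoading(true);
  try {
    return await task();
  } finally {
    // Runs on success and on failure; errors still propagate to the caller.
    setLoading(false);
  }
}
```

Inside fetchPrompt this could wrap the postApiV1Prompt call, leaving the catch block responsible only for logging the error.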

Handling Prompt Submit

In this section, we will create the function for submitting our prompt in the frontend of our AI application.

// src/utils.js

export const handlePromptSubmit = async (prompt, setResponse) => {

try {

const res = await fetch(

`${import.meta.env.VITE_AI_WARP_URL}/api/v1/stream`,

{

method: "POST",

headers: {

"Content-Type": "application/json",

},

body: JSON.stringify({ prompt }),

}

);

if (!res.ok) {

const errorResponse = await res.json();

setResponse(`Error: ${errorResponse.message} (${res.status})`);

return;

}

const reader = res.body.getReader();

const decoder = new TextDecoder();

let completeResponse = "";

let loading = true;

while (loading) {

const { done, value } = await reader.read();

if (done) break;

const decodedValue = decoder.decode(value, { stream: true });

const lines = decodedValue.split("\n");

for (let i = 0; i < lines.length; i++) {

const line = lines[i];

if (line.startsWith("event: ")) {

const eventType = line.substring("event: ".length);

const dataLine = lines[++i];

if (dataLine && dataLine.startsWith("data: ")) {

const data = dataLine.substring("data: ".length);

const json = JSON.parse(data);

if (eventType === "content") {

completeResponse += json.response;

} else if (eventType === "error") {

setResponse(`Error: ${json.message} (${json.code})`);

return;

}

}

}

}

}

setResponse(completeResponse);

} catch (error) {

console.error("Failed to fetch data:", error);

setResponse("Failed to fetch data.");

}

};

export const handleKeyDown = (event, handlePromptSubmitCallback) => {

if (event.key === "Enter" && !event.shiftKey) {

event.preventDefault();

handlePromptSubmitCallback();

}

};

This function sends a user prompt to the /api/v1/stream endpoint of the base URL and handles the response. It first checks that the request succeeded; if it did not, it reads the error details from the API's response and displays them.

It reads the response body as a stream, decodes each chunk, parses the event and data lines, and appends the content of each event to the complete response. If the stream contains an error event, its message and code are shown to the user. Any exception thrown during the request or while processing the stream is caught and logged, and a generic error message is displayed instead.
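The loop above splits each decoded chunk on newlines, which works when an event line and its data line arrive in the same chunk. Network chunks can be cut at any byte, so a more defensive variant buffers text until a full event has arrived. The sketch below assumes events are separated by a blank line and use \n line endings; createSseParser and its callback shape are hypothetical names for illustration.

```javascript
// Hypothetical buffered parser for "event: ...\ndata: {...}\n\n" streams.
// Returns a push(chunk) function; onEvent is called once per complete event.
function createSseParser(onEvent) {
  let buffer = '';

  return function push(chunk) {
    buffer += chunk;

    // A blank line (two consecutive newlines) marks the end of one event.
    let boundary;
    while ((boundary = buffer.indexOf('\n\n')) !== -1) {
      const block = buffer.slice(0, boundary);
      buffer = buffer.slice(boundary + 2);

      let type = 'message';
      const dataLines = [];
      for (const line of block.split('\n')) {
        if (line.startsWith('event: ')) {
          type = line.slice('event: '.length);
        } else if (line.startsWith('data: ')) {
          dataLines.push(line.slice('data: '.length));
        }
      }

      // Skip blocks without data (for example, comments or keep-alives).
      if (dataLines.length > 0) {
        onEvent({ type, data: JSON.parse(dataLines.join('\n')) });
      }
    }
  };
}
```

In handlePromptSubmit, each decoded chunk would be passed to push, and the onEvent callback would append data.response for content events or report data.message and data.code for error events.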

The handleKeyDown function checks if the Enter key is pressed without the Shift key. If so, it prevents the default action (e.g., form submission) and triggers the handlePromptSubmitCallback function used to submit data.

Building our React application

Let's start by updating the App.jsx file with our functions and adding a React component:

import { useEffect } from "react";

import "./App.css";

import { useFetchPrompt, handlePromptSubmit, handleKeyDown } from "./utils.js";

function AIWarp() {

const { prompt, setPrompt, response, setResponse, fetchPrompt, loading } =

useFetchPrompt();

useEffect(() => {

fetchPrompt();

}, [fetchPrompt]);

const handlePromptChange = (event) => {

setPrompt(event.target.value);

};

const submit = () => handlePromptSubmit(prompt, setResponse);

return (

<div className="container">

<div className="ai-root">

<div className="label-container">

<label className="label-text" htmlFor="prompt-text">

Ask AI warp:

</label>

</div>

<div className="textarea-container">

<textarea

className="textarea-text"

name="prompt text"

id="prompt-text"

cols="60"

rows="1"

value={prompt}

onChange={handlePromptChange}

onKeyDown={(e) => handleKeyDown(e, submit)}

/>

</div>

<div className="button-container">

<button className="button-text" onClick={submit}>

Prompt

</button>

</div>

<div className="card">

{loading ? (

<p>Loading...</p>

) : (

<p className="card-card">{response}</p>

)}

</div>

</div>

</div>

);

}

export default AIWarp;

The AIWarp component uses the useFetchPrompt hook to manage state and fetch initial data. A textarea captures user input: it updates the prompt state on change and triggers handlePromptSubmit when Enter is pressed or the button is clicked. While the loading state is true, the component shows a loading message; otherwise, it renders the response.

Our application should look like this:

Wrapping Up

In conclusion, integrating AI capabilities into your applications using Platformatic AI-Warp and the Platformatic frontend client can significantly enhance user experiences and streamline interactions with AI services.

To improve the interaction, you can extend this application by refining how the responses are handled on the frontend.
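One possible refinement, sketched under assumptions, is to update the response as each content event arrives instead of waiting for the whole stream. The event shapes (content events carrying response, error events carrying message and code) come from handlePromptSubmit above; createIncrementalResponder is a hypothetical helper name.

```javascript
// Hypothetical helper: push partial text to the UI as content events arrive.
function createIncrementalResponder(setResponse) {
  let text = '';

  return function handleEvent(eventType, json) {
    if (eventType === 'content') {
      text += json.response;
      // Publish the text accumulated so far on every content event.
      setResponse(text);
    } else if (eventType === 'error') {
      setResponse(`Error: ${json.message} (${json.code})`);
    }
  };
}
```

Inside handlePromptSubmit, handleEvent(eventType, json) could replace the branch that currently appends to completeResponse, so the user sees the answer grow while it streams.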